We built a recipe site with hundreds of pages.

Everything worked perfectly — for visitors.

But for Google, the site was virtually empty. I went looking in Search Console.

Only when we examined the HTML source did we see the problem:

the entire site was a React app where the real content only appeared after JavaScript execution.
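
A check like this is easy to script. Below is a minimal sketch, not the tooling we used: it strips scripts, styles and tags from raw HTML and looks at how much readable text is left before any JavaScript runs. The function names, the 200-character threshold and the sample shell are all assumptions for illustration.

```typescript
// Rough check: how much readable text does the raw HTML contain
// before any JavaScript has run?
function visibleTextWithoutJs(html: string): string {
  return html
    .replace(/<script\b[\s\S]*?<\/script>/gi, " ") // drop scripts
    .replace(/<style\b[\s\S]*?<\/style>/gi, " ") // drop stylesheets
    .replace(/<[^>]+>/g, " ") // drop all remaining tags
    .replace(/\s+/g, " ") // collapse whitespace
    .trim();
}

// Assumption: fewer than `minChars` characters of text means "empty shell".
function looksLikeEmptyShell(html: string, minChars = 200): boolean {
  return visibleTextWithoutJs(html).length < minChars;
}

// Hypothetical SPA shell: a title, a mount point and a script tag.
const spaShell = `<!doctype html><html><head><title>Recepten</title></head>
<body><div id="root"></div><script src="/assets/app.js"></script></body></html>`;

console.log(visibleTextWithoutJs(spaShell)); // only the title text survives
console.log(looksLikeEmptyShell(spaShell)); // true for this shell
```

For a live page, the HTML could come from `fetch(url).then((r) => r.text())`, which returns the source as the server sends it, without running any scripts.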

The Architecture Built by AI

When we started building pb.nl/recepten, we primarily wanted one thing: to go live quickly.

With the help of AI and Replit, a complete site was up in no time:

hundreds of recipes

filters and categories

a CMS

a modern interface

The technical stack that emerged is quite common nowadays:

React + Express

This means the site was built as a Single Page Application (SPA).

For dashboards and tools, this works excellently.

But a content site with hundreds of pages is a different story: Google first has to render each page with JavaScript before it can see any content.

The Result

In Google Search Console, the notifications started coming in:

Soft 404

Page indexed without content

Crawled – currently not indexed

When we reviewed the overview, the problem was larger than expected.

More than 700 pages were not indexed.

The site worked perfectly for visitors,

but for Google, it barely existed. Really frustrating.

Performance Told the Same Story

When we measured the site, we saw the same pattern.

Because all content had to be loaded via JavaScript:

First Contentful Paint: about 6.4 seconds

Largest Contentful Paint: about 9.6 seconds

hundreds of kilobytes of JavaScript before anything was visible

Technically, the site functioned as a web application, not as a content website.

The Temporary Solution

Our first reaction was a quick fix.

We injected server-side HTML outside of React, so Google could read something.
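
As an illustration of the idea, here is a minimal sketch of that kind of injection, not our actual code. It assumes the SPA shell has a `<div id="root"></div>` mount point and places plain recipe HTML in front of it, so the markup sits outside the part React manages. The `Recipe` shape, the function names and the `seo-fallback` id are all hypothetical.

```typescript
// Hypothetical recipe shape, for illustration only.
interface Recipe {
  title: string;
  ingredients: string[];
  steps: string[];
}

// Escape text so recipe content cannot break the surrounding HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Plain HTML version of a recipe that a crawler can read without JavaScript.
function renderRecipeFallback(recipe: Recipe): string {
  const ingredients = recipe.ingredients.map((i) => `<li>${escapeHtml(i)}</li>`).join("");
  const steps = recipe.steps.map((s) => `<li>${escapeHtml(s)}</li>`).join("");
  return `<article id="seo-fallback"><h1>${escapeHtml(recipe.title)}</h1>` +
    `<ul>${ingredients}</ul><ol>${steps}</ol></article>`;
}

// Assumption: the React mount point is exactly <div id="root"></div>.
function injectFallback(shellHtml: string, recipe: Recipe): string {
  const mountPoint = '<div id="root"></div>';
  // A replacer function keeps "$" in recipe text from being treated as a pattern.
  return shellHtml.replace(mountPoint, () => renderRecipeFallback(recipe) + mountPoint);
}

const shell = '<html><body><div id="root"></div><script src="/assets/app.js"></script></body></html>';
console.log(injectFallback(shell, {
  title: "Pannenkoeken",
  ingredients: ["250 g bloem", "2 eieren"],
  steps: ["Meng alles.", "Bak in een pan."],
}));
```

In a setup like ours, an Express route handler would call something like `injectFallback` just before sending the shell. The catch is that the same content then lives in two places, once in the fallback and once in React.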

That worked partially.

Google suddenly started recognising recipes.

But it remained a hack on top of an architecture that wasn't really meant for this.

The Real Solution

So we decided to tackle the problem at its source. I had Claude draw up a plan and asked ChatGPT to weigh in, so it took two AIs to convince me to make the right choices.

We changed not only the code but also the rendering strategy of the site.

The solution was Next.js.

Next.js can generate pages server-side or statically, making the HTML immediately complete when a crawler opens the page.
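
To make that concrete, here is a rough sketch of static generation, assuming the Next.js Pages Router (the App Router does the same job with different conventions). At build time Next.js calls `getStaticPaths` to learn which pages exist and `getStaticProps` to collect the data for each one. The `Recipe` type, the `loadAllRecipes` helper and the sample data are hypothetical, and the page component itself is left out.

```typescript
// Hypothetical recipe shape and data source, for illustration only.
interface Recipe {
  slug: string;
  title: string;
  ingredients: string[];
  steps: string[];
}

const sampleRecipes: Recipe[] = [
  { slug: "pannenkoeken", title: "Pannenkoeken", ingredients: ["250 g bloem"], steps: ["Meng alles."] },
  { slug: "erwtensoep", title: "Erwtensoep", ingredients: ["500 g spliterwten"], steps: ["Kook de erwten."] },
];

// Stand-in for a CMS or database query.
async function loadAllRecipes(): Promise<Recipe[]> {
  return sampleRecipes;
}

// In a real pages/recepten/[slug].tsx file, both functions below would be
// exported so that Next.js can call them during the build.

// Tells Next.js which slugs to turn into static HTML files.
async function getStaticPaths() {
  const recipes = await loadAllRecipes();
  return {
    paths: recipes.map((r) => ({ params: { slug: r.slug } })),
    fallback: false, // unknown slugs return a 404
  };
}

// Supplies the data for one page; runs at build time, not in the browser.
async function getStaticProps(context: { params?: { slug?: string } }) {
  const recipes = await loadAllRecipes();
  const recipe = recipes.find((r) => r.slug === context.params?.slug);
  if (!recipe) {
    return { notFound: true as const };
  }
  return { props: { recipe } };
}
```

The page component receives `recipe` as a prop and is rendered to HTML during the build, so the finished markup is already on disk when a crawler asks for it.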

So when Google now requests a page, it immediately sees:

the title

the ingredients

the preparation steps

the recipe structured data

All without executing JavaScript. We checked with https://search.google.com/test/rich-results, and every component was read correctly.
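
For readers who haven't seen recipe structured data, here is a minimal sketch of how it could be built. It uses the schema.org `Recipe` type with `name`, `recipeIngredient` and `recipeInstructions`; a real page would carry more fields (an image, for example). The `Recipe` interface and the function names are hypothetical and not taken from our codebase.

```typescript
// Hypothetical recipe shape, for illustration only.
interface Recipe {
  title: string;
  ingredients: string[];
  steps: string[];
}

// Build a schema.org Recipe object for JSON-LD.
function buildRecipeJsonLd(recipe: Recipe) {
  return {
    "@context": "https://schema.org",
    "@type": "Recipe",
    name: recipe.title,
    recipeIngredient: recipe.ingredients,
    recipeInstructions: recipe.steps.map((text) => ({ "@type": "HowToStep", text })),
  };
}

// Wrap the JSON-LD in a script tag for the page's HTML.
function toJsonLdScriptTag(recipe: Recipe): string {
  // Escape "<" so recipe text can never close the script tag early.
  const json = JSON.stringify(buildRecipeJsonLd(recipe)).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

console.log(toJsonLdScriptTag({
  title: "Pannenkoeken",
  ingredients: ["250 g bloem", "2 eieren"],
  steps: ["Meng alles.", "Bak in een pan."],
}));
```

Because the tag is part of the statically generated HTML, a crawler finds it in the first response and does not depend on a script adding it later.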

React SPA vs Next.js

The difference between the two approaches is actually quite simple.

React SPA:

Browser → HTML empty

→ Load JavaScript

→ Fetch API

→ React render

→ Content visible

Next.js:

Browser → HTML complete

→ Content directly visible

→ JavaScript only for interaction

For search engines, that makes a world of difference.

What Has Changed Now

After the migration, the site looks almost the same to visitors.

But under the hood, everything has changed.

The recipes are now statically generated.

Instead of a React app fetching content, each page gets its own HTML.

In our case, this means:

770+ static pages

server-side HTML

recipe structured data

delivery via a CDN

Pages now load almost instantly, which I find makes a significant difference to the experience.

And more importantly: search engines can finally read them fully.

What I've Learned from This

AI can now build a working application at lightning speed.

But AI usually builds apps.

Not automatically SEO-friendly content sites.

For tools and dashboards, an SPA is perfect.

For a site with hundreds of articles or recipes, one principle remains important:

HTML that is directly readable.

Where We Stand Now

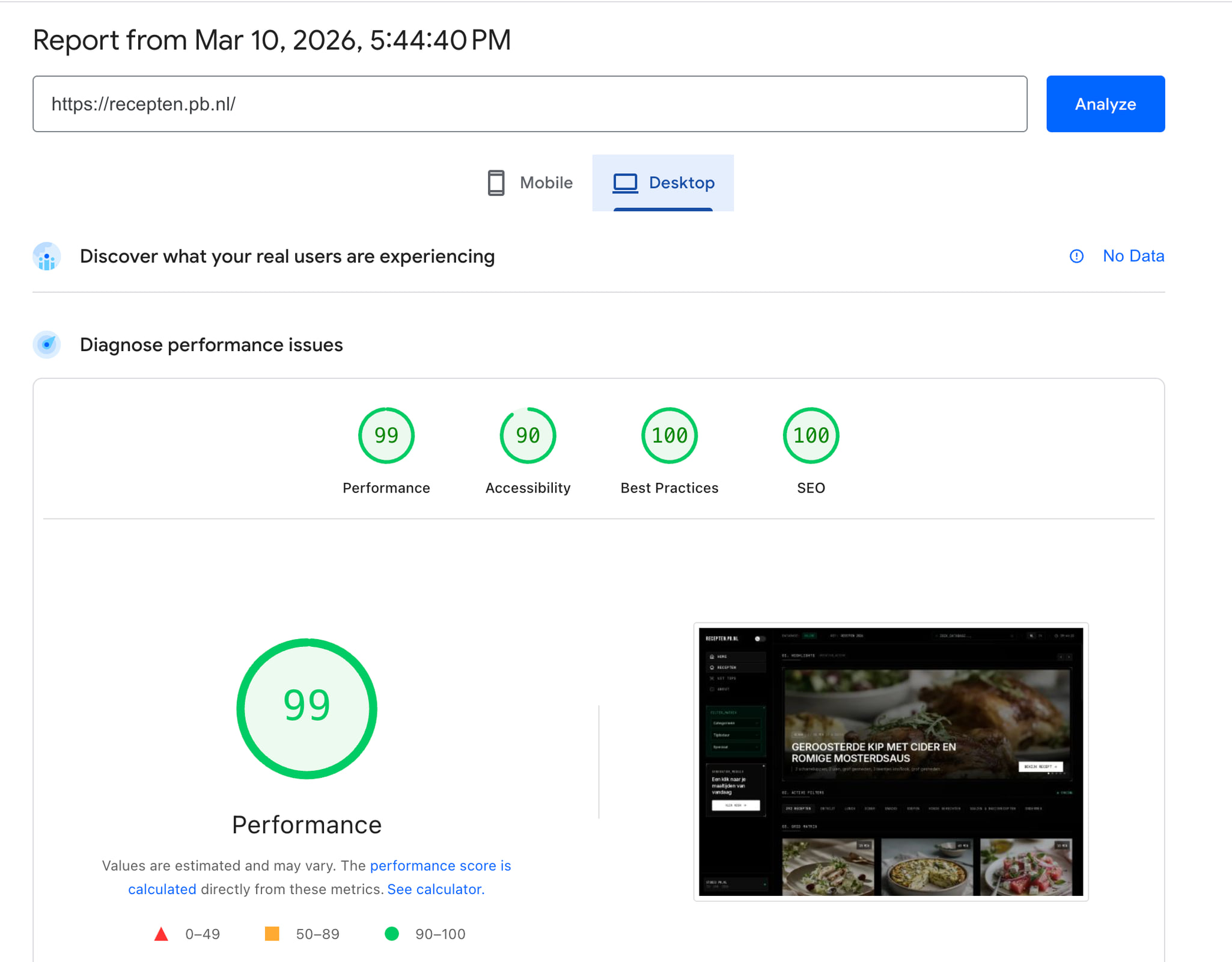

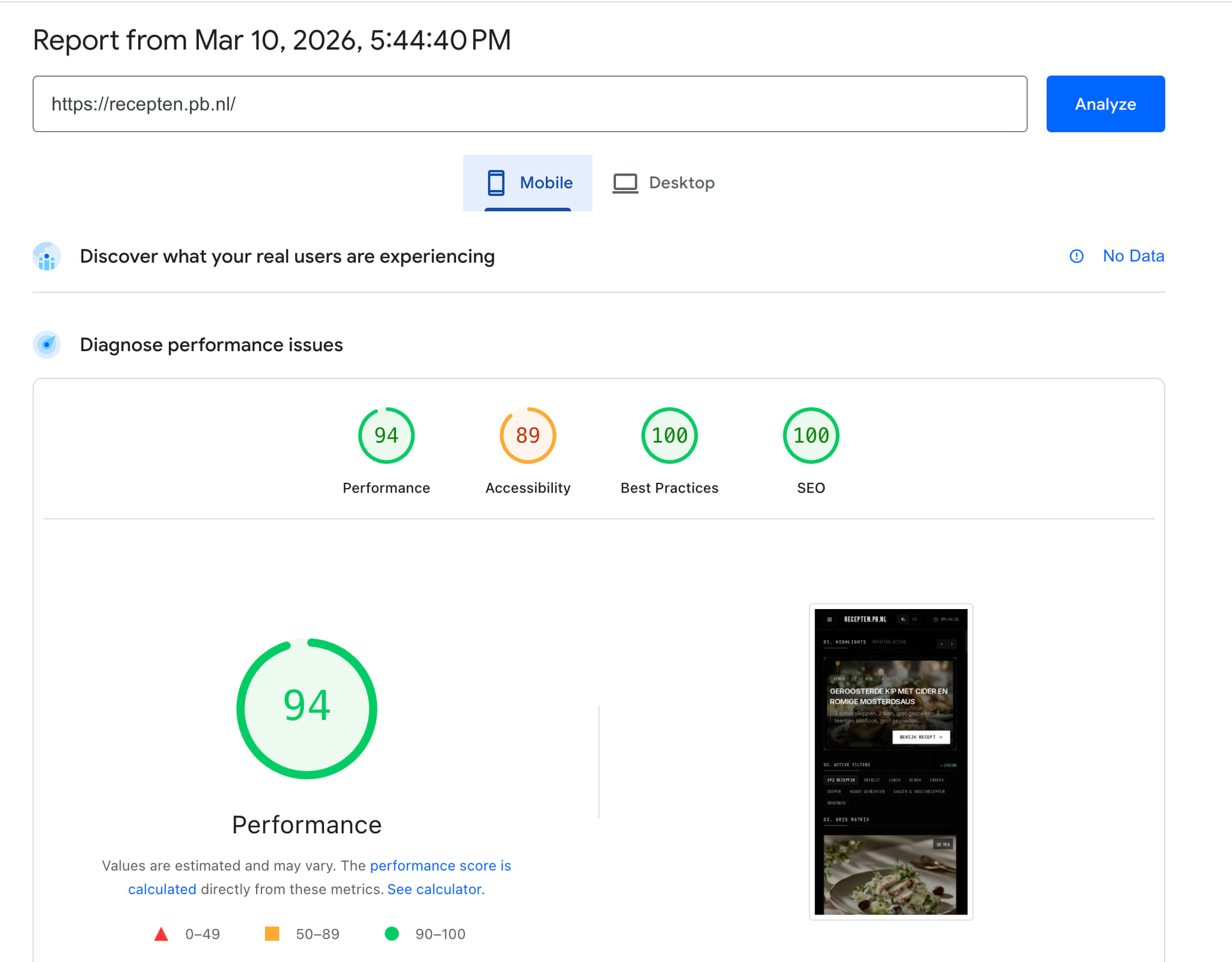

The new site now runs as a Next.js build with statically generated pages.

The first tests show that Google can now recognise the recipes directly, and the pages load much faster.

All in all, Claude Code (in the terminal) with 3 extra agents spent about 5 hours rebuilding the entire front end and CMS, with me testing constantly. I keep noticing that you have to spell everything out and watch it like a hawk, because the agents leave components out.

There was also a scary moment: I had bypass on, so everything went like wildfire, and OOPS, the front end was already live. But almost everything was there, so I left it as it was. I broke a bit of a sweat, but we just carried on.

Our automatic JSON uploads (for recipes) and the image compression were gone as well. Phew. Almost fixed now :)

But I really did go through everything, and now this speed score! Never had one like it!! Almost everything at 100% :)

Now we're waiting for Google; everything has been resubmitted in Search Console.

Endoor

Peet.